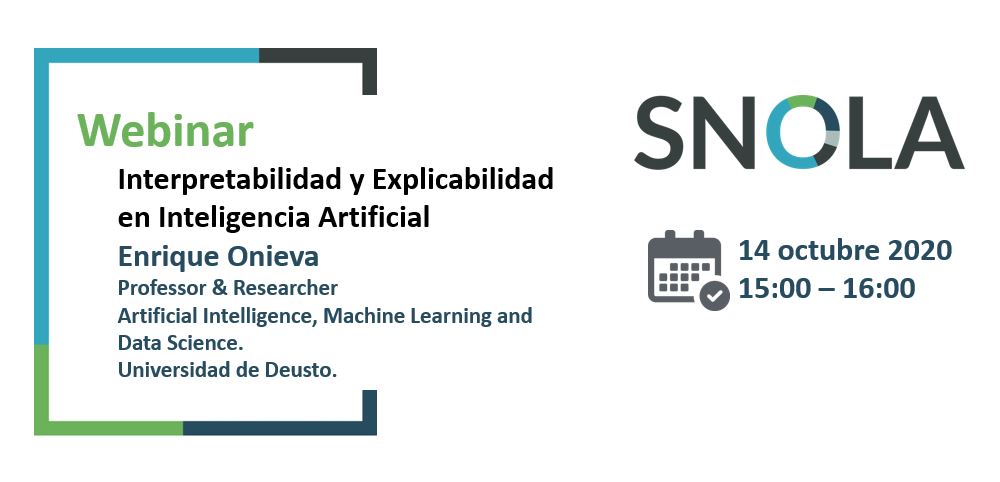

SNOLA Webinar: Interpretability and Explicability in Artificial Intelligence, Enrique Onieva

Webinar by Enrique Onieva (University of Deusto) for the SNOLA network (https://snola.es/)

Summary: The field of Artificial Intelligence, driven by Machine Learning and Deep Learning, has experienced outstanding advances in the last decade. We have seen its potential applied in a wide range of environments, from industry in various domains to technology, health, science and many others. The key aim of the area has been to address and solve real-world problems, automate complex tasks and make our lives easier and better. The algorithms used learn patterns and relationships that are not evident in the data, so explaining how an algorithm works always poses its own set of challenges. This is particularly true in areas where decisions are sensitive and can have an impact on people. The interpretability and explainability of algorithms seek to understand and explain why and how such a decision is reached. In this seminar, we will address basic issues of model interpretability and explainability.

Speaker: Enrique Onieva - https://www.linkedin.com/in/enriqueonieva/

Biography: Enrique Onieva is a lecturer in artificial intelligence, machine learning and Big Data at the University of Deusto, as well as a researcher in Intelligent Transport Systems, Mobility and Smart Cities in the Deusto-SmartMobility Unit. He is director of the Big Data and Business Intelligence programme, as well as of the PhD programme in Engineering for the Information Society and Sustainable Development in the Faculty of Engineering, and has participated in and led more than 25 research projects, including CYBERCARS-2 (FP6), ICSI (FP7), PostLowCit (CEF-Transport), and TIMON, Drive2theFuture and LOGISTAR (H2020). He is the author of more than 100 scientific articles. His research interest is the application of Artificial Intelligence to different areas of impact on society, including decision-making based on fuzzy logic, evolutionary optimisation, machine learning and deep learning.

Tags

big data

Campus Bilbao

Faculty of Engineering

learning analytics

machine learning

New Technologies and Internet

Press

SNOLA